Embodied social behavior in VR

Today I ran into a video that highlights something I’ve been thinking about for a while: if we want to use VR to tell (non-farcical) stories about characters interacting in 3D space, we need to figure out how to recognize and respond to embodied social behavior – things like body language, personal space, and contextually (in)appropriate physical actions for the current social practice.

My favorite part of the video is the bit from ~9:00-9:25. The player egregiously violates dozens of implicit social rules at once, yet there’s no reaction whatsoever from the game.

Crafting socially believable NPCs is already a huge challenge for non-VR games, but VR adds a whole new level of difficulty. Existing frameworks for scripting conversation and other forms of social behavior in game characters are totally unequipped to deal with body language and gesture.

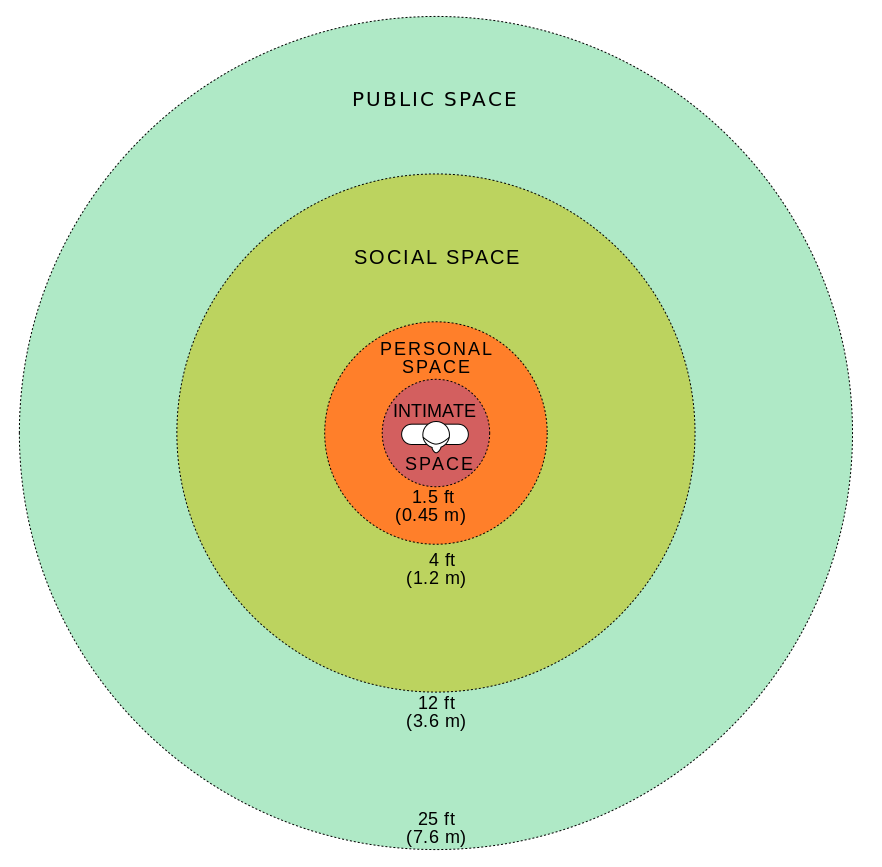

One potential research direction here is to construct computational models of proxemics – basically how humans use space in interpersonal interactions. Some of the VR projects I worked on at the Mobile & Environmental Media Lab actually dealt with proxemics to a certain extent! But the intersection between VR and proxemics is so huge that we were really only able to scratch the surface during my time there.
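As a rough illustration of what even the simplest computational model of proxemics might look like, here’s a minimal Python sketch. It assumes Edward T. Hall’s commonly cited distance zones (intimate, personal, social, public) with approximate metric thresholds, which in reality vary by culture, relationship, and situation. The function names, relationship categories, and the `"step_back"` reaction are all hypothetical, and none of this is taken from the lab projects mentioned above.

```python
import math
from enum import Enum


class ProxemicZone(Enum):
    INTIMATE = 0
    PERSONAL = 1
    SOCIAL = 2
    PUBLIC = 3


# Approximate upper bounds in metres, loosely after Hall's zones.
# Assumption for illustration only: real norms vary widely.
ZONE_BOUNDS = [
    (0.45, ProxemicZone.INTIMATE),
    (1.2, ProxemicZone.PERSONAL),
    (3.6, ProxemicZone.SOCIAL),
]

# Hypothetical: the closest zone an NPC tolerates for each relationship.
TOLERATED_ZONE = {
    "stranger": ProxemicZone.SOCIAL,
    "acquaintance": ProxemicZone.PERSONAL,
    "partner": ProxemicZone.INTIMATE,
}


def classify_distance(distance_m: float) -> ProxemicZone:
    """Map an interpersonal distance in metres to a proxemic zone."""
    for bound, zone in ZONE_BOUNDS:
        if distance_m < bound:
            return zone
    return ProxemicZone.PUBLIC


def npc_reaction(player_pos, npc_pos, relationship: str = "stranger") -> str:
    """Hypothetical NPC response to where the player is standing.

    Returns "step_back" if the player is closer than the relationship
    allows, otherwise "none".
    """
    zone = classify_distance(math.dist(player_pos, npc_pos))
    if zone.value < TOLERATED_ZONE[relationship].value:
        return "step_back"
    return "none"


if __name__ == "__main__":
    # A stranger 30 cm away intrudes on intimate space.
    print(npc_reaction((0.0, 0.0, 0.0), (0.3, 0.0, 0.0)))
```

Distance alone is obviously a crude proxy; body orientation, gesture, and the current social practice would all need to feed into the reaction before an NPC could respond believably to something like the scene in that video.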

The term “social practice” that I used earlier in this post also points to another potential research direction. I first encountered this term in the context of Emily Short and Richard Evans’s work on the interactive fiction engine Versu, which uses the concept of social practices to model the context-sensitivity of social behavior on a much deeper level than I’ve seen anywhere else.

(This post was rescued from Twitter thanks to Jason, who tirelessly refuses to let me forget that I do in fact have an actual blog.)